Facial recognition is rapidly gaining ground as a method of identification in many fields. On the surface, it looks like a foolproof and secure way of verifying who someone is, but that is far from reality. A team of researchers has now shown how modern facial recognition algorithms can be tricked into seeing someone who isn’t there.

We use facial recognition a lot in our daily lives. Many modern phones unlock with it, it plays a significant role in cybersecurity, and it is a crucial part of identification at modern airports. These are just a few widespread uses of the technology, and the list grows every day.

For this research, a cybersecurity team from McAfee set up an attack on a facial recognition system. The system is similar to what airports use for identification and passport verification.

They used machine learning to create an image that looks like one person to the human eye but is identified by the computer as somebody else. In other words, the algorithm could be tricked into letting someone who is on the no-fly list board a flight.

Steve Povolny is the lead author of this study. He said, “If we go in front of a live camera that is using facial recognition to identify and interpret who they’re looking at and compare that to a passport photo, we can realistically and repeatedly cause that kind of targeted misclassification.”


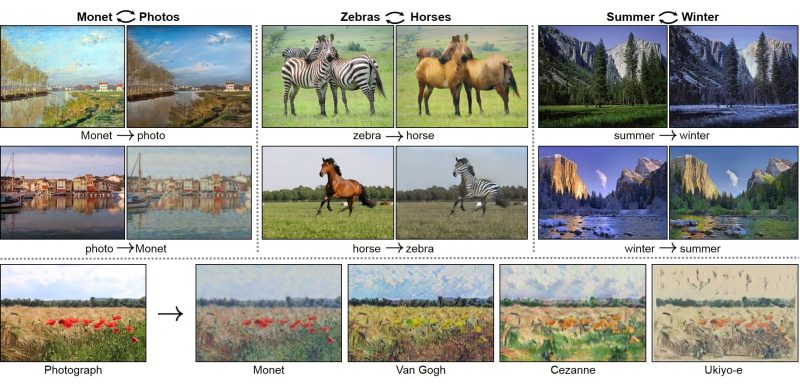

The researchers used an image translation algorithm called CycleGAN to confuse the facial recognition software. CycleGAN morphs pictures from one type to another. With it, you can transform a horse into a zebra, make images look like they were taken in a different season, or even turn a photograph into a painting in the style of a famous painter.
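For the curious, here is a rough idea of what keeps CycleGAN honest while it morphs pictures. It trains two generators, one for each direction, and penalizes them when a picture sent there and back does not come home unchanged. This is known as the cycle-consistency loss. The snippet below is only a toy sketch of that one term: the "images" are short lists of numbers, and `G`, `F` and `F_bad` are made-up stand-ins, not real neural networks and not anything taken from the McAfee study.

```python
def mean_abs_diff(a, b):
    """Average absolute difference between two equally sized 'images'."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def cycle_consistency_loss(x, y, G, F):
    """L1 cycle loss: x -> G -> F should return to x, and y -> F -> G to y."""
    return mean_abs_diff(F(G(x)), x) + mean_abs_diff(G(F(y)), y)


# Hypothetical stand-ins for the two generators.
def G(img):
    # "Translate" domain X to domain Y by brightening every pixel.
    return [p + 0.2 for p in img]


def F(img):
    # Translate back by darkening, which exactly undoes G.
    return [p - 0.2 for p in img]


def F_bad(img):
    # A poor reverse generator that ignores what G did.
    return list(img)


x = [0.1, 0.5, 0.9]  # toy image from domain X
y = [0.3, 0.6, 0.7]  # toy image from domain Y

good_loss = cycle_consistency_loss(x, y, G, F)      # close to zero
bad_loss = cycle_consistency_loss(x, y, G, F_bad)   # clearly larger
print(good_loss, bad_loss)
```

A pair of generators that undo each other scores near zero, while the mismatched pair is punished. In a real CycleGAN this term is combined with adversarial losses that push the outputs to look like genuine pictures from the target domain.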

The Process:

The team from McAfee used 1,500 photos of the two lead researchers on the project and used CycleGAN to morph them into each other. At the same time, they ran a facial recognition algorithm on the generated images to see who it recognized.

After generating a large number of images, CycleGAN eventually produced one that looked like one of the researchers to the human eye but was similar enough to the other to fool facial recognition into thinking it was him.
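The generate-and-check loop described above can be illustrated with a small toy. To be clear, this is not the McAfee code: faces are reduced to four made-up numbers, CycleGAN is swapped for a brute-force blend of the two faces, and the recognizer is a hypothetical one that is assumed to lean heavily on a single feature. The only point is to show how a candidate can stay close to person A overall while the recognizer still labels it as person B.

```python
from itertools import product

# Hypothetical 4-number "faces"; real systems work on images.
PERSON_A = [0.0, 0.0, 0.0, 0.0]
PERSON_B = [1.0, 1.0, 1.0, 1.0]

# Assumption: the toy recognizer weighs the first feature far more than the rest.
RECOGNIZER_WEIGHTS = [10.0, 1.0, 1.0, 1.0]


def recognizer_label(face):
    """Return 'A' or 'B' depending on which reference is closer (weighted)."""
    def weighted_dist(ref):
        return sum(w * (f - r) ** 2
                   for w, f, r in zip(RECOGNIZER_WEIGHTS, face, ref))
    return "B" if weighted_dist(PERSON_B) < weighted_dist(PERSON_A) else "A"


def human_distance(face, ref):
    """Crude proxy for how different two faces look to a person."""
    return sum(abs(f - r) for f, r in zip(face, ref)) / len(face)


def blend(mix):
    """Blend A towards B feature by feature; stands in for the image generator."""
    return [a + t * (b - a) for a, b, t in zip(PERSON_A, PERSON_B, mix)]


def find_adversarial_face():
    """Keep the candidate labelled 'B' that still looks most like A."""
    best = None
    for mix in product([0.0, 0.5, 1.0], repeat=4):
        face = blend(mix)
        if recognizer_label(face) != "B":
            continue  # the recognizer was not fooled by this candidate
        score = human_distance(face, PERSON_A)
        if best is None or score < best[0]:
            best = (score, face)
    return best


score, face = find_adversarial_face()
print(face, score, recognizer_label(face))
```

In this toy, the winning candidate changes only the one feature the recognizer cares about most, so by the crude "human" measure it remains much closer to A than to B. Real attacks search a far larger space of images, which is where a trained generator such as CycleGAN comes in.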

Although this research raises several questions about modern security that relies on facial recognition, there is a reason nobody has tried this in the real world yet. The research team used an open-source facial recognition algorithm because they could not get their hands on the actual software that security organizations use at airports and other places.

Steve Povolny said that this is why no one is likely to try this method: no one can get access to the exact system in place at a secure location. However, there are a lot of similarities between facial recognition algorithms, and he seemed fairly confident that their method would work on an airport system or any other. Another thing to keep in mind is that AI models like CycleGAN need powerful computers and expertise to train and run.


The researchers from McAfee explained that their main goal is to demonstrate the weaknesses present in AI systems. By doing so, they hope to show that the role of humans remains significant and essential. In the words of Povolny:

“AI and facial recognition are incredibly powerful tools to assist in the pipeline of identifying and authorizing people. But when you just take them and blindly replace an existing system that relies entirely on a human, then you have introduced maybe a greater weakness than you had before.”

Do you think we should continue to rely entirely on facial recognition algorithms, or do we need to rethink our strategy? Let us know in the comments below!