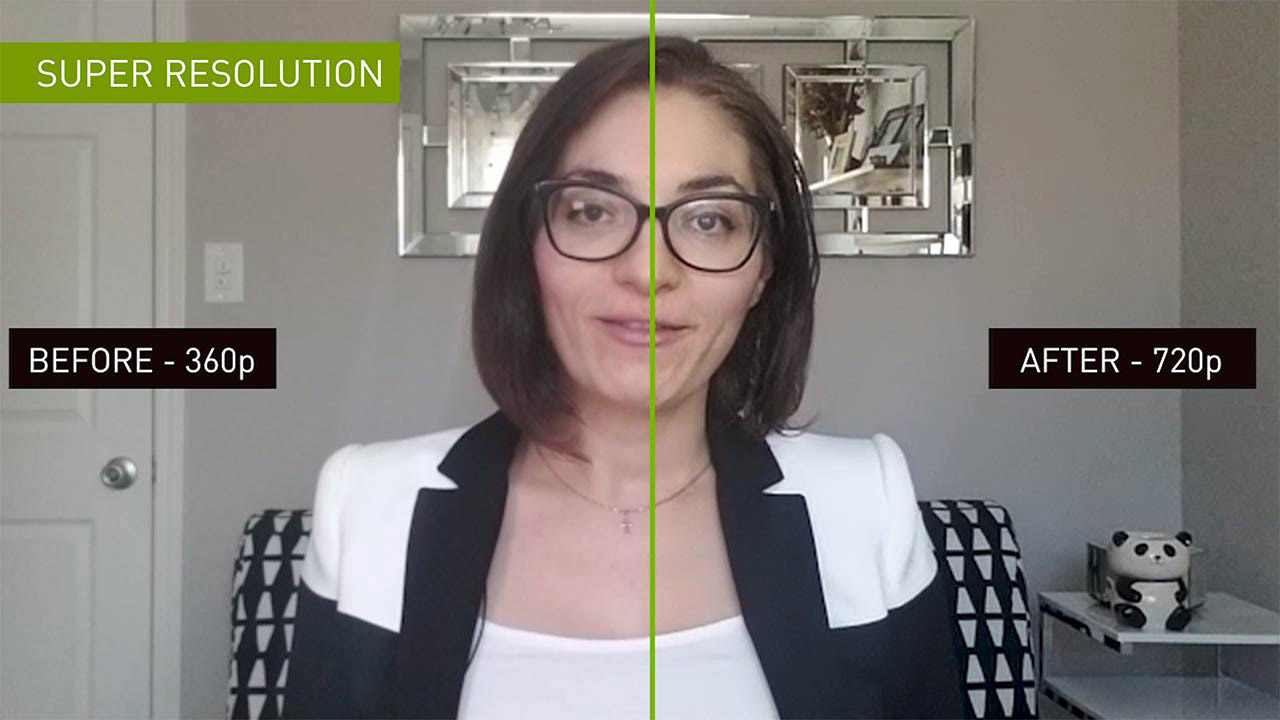

At the launch event for its Ampere series of gaming cards, NVIDIA also showed some exciting AI projects it was working on. We have already seen some of that work in action in the DLSS algorithm for video games. Now, in recent news, we see what NVIDIA has achieved in AI-based video compression. Its solution significantly reduces video size while maintaining exceptional quality.

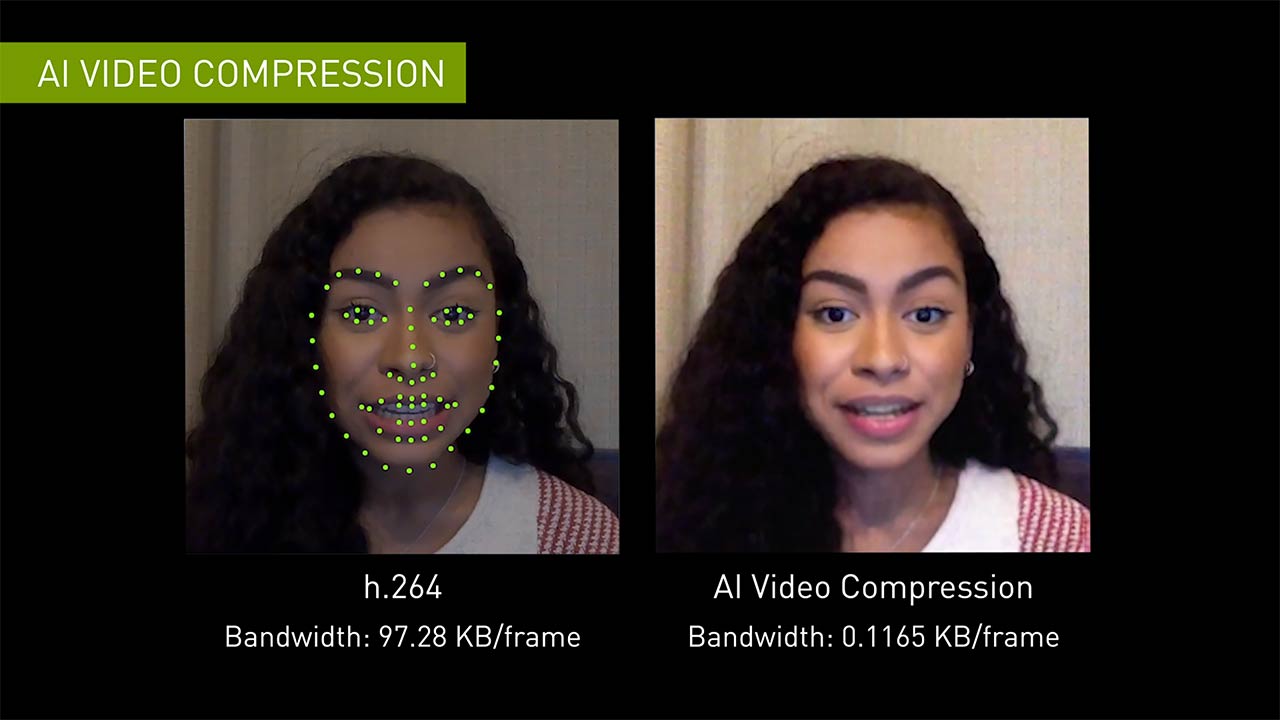

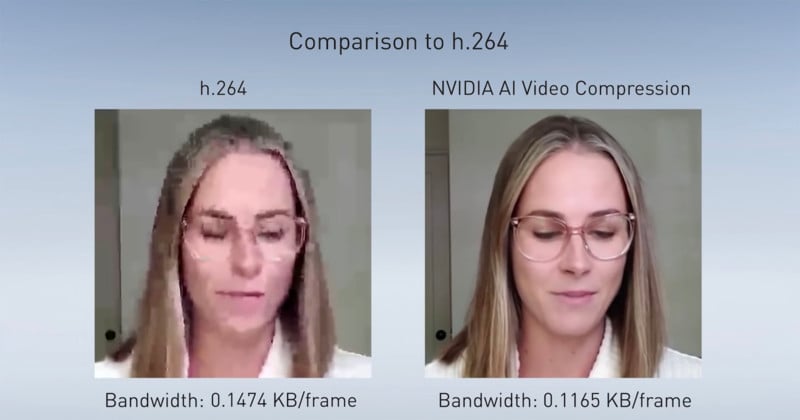

Interestingly, NVIDIA has achieved these results by replacing the conventional H.264 video codec with a neural network. It looks like they have managed to reduce the bandwidth required for video calls by orders of magnitude. They showed an example where the algorithm reduced the required data from 97.28 KB/frame to a mere 0.1165 KB/frame, a whopping reduction to roughly 0.12 percent of the original value.
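To put the quoted figures in perspective, here is a quick back-of-the-envelope calculation. The two per-frame sizes come from NVIDIA's example; the 30 frames per second used for the bandwidth estimate is an assumption for illustration only, as the example does not state a frame rate.

```python
# Per-frame sizes quoted in NVIDIA's example (KB per frame).
h264_kb_per_frame = 97.28
neural_kb_per_frame = 0.1165

# Share of the original data that still has to be sent, and the inverse ratio.
remaining_fraction = neural_kb_per_frame / h264_kb_per_frame
compression_ratio = h264_kb_per_frame / neural_kb_per_frame

# ASSUMPTION: 30 frames per second, chosen only to illustrate bandwidth.
ASSUMED_FPS = 30
h264_kb_per_second = h264_kb_per_frame * ASSUMED_FPS
neural_kb_per_second = neural_kb_per_frame * ASSUMED_FPS

print(f"Data remaining: {remaining_fraction:.2%}")
print(f"Compression ratio: {compression_ratio:.0f}x")
print(f"H.264 stream at {ASSUMED_FPS} fps: {h264_kb_per_second:.1f} KB/s")
print(f"Neural stream at {ASSUMED_FPS} fps: {neural_kb_per_second:.2f} KB/s")
```

The remaining share works out to about 0.12 percent, which is a reduction of roughly 835 times, or close to three orders of magnitude.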

You might think that this would take a lot of computation and be extremely complex. However, the working principle behind the algorithm is quite straightforward. Conventional video conferencing software compresses the video frames using H.264 encoding, which means encoded picture data has to be sent for every frame. This approach is data-heavy. The new method replaces that per-frame video data with a much smaller set of data that a neural network uses to rebuild the picture.

With the new AI-based model, the sender first transmits a reference image of the caller. What happens next differs from the conventional approach. Instead of sending the following frames, the sender's computer sends only specific reference points on the image, located around the eyes, nose, and mouth.

The receiver side then runs a GAN (generative adversarial network), which is a specialized type of neural network. This system takes the reference image and combines it with the key points to reconstruct the frame that was sent. Since the key points are much smaller than the actual image pixels, this method requires far less bandwidth. Consequently, even on an extremely slow internet connection, the new system can still deliver a great video call experience.
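The size difference between key points and pixels can be illustrated with a minimal sketch. Everything specific here is an assumption for illustration: the number of key points, the 16-bit encoding, the frame resolution, and the function names are hypothetical and are not NVIDIA's actual format or API. The receiver-side GAN is deliberately left out, since the sketch only covers what travels over the network.

```python
import struct
from typing import List, Tuple

Keypoint = Tuple[float, float]  # normalized (x, y), each in the range [0, 1]

# ASSUMPTIONS for illustration only; the article gives none of these values.
NUM_KEYPOINTS = 10
FRAME_WIDTH, FRAME_HEIGHT, CHANNELS = 640, 480, 3
SCALE = 65535  # map [0, 1] onto an unsigned 16-bit integer


def encode_keypoints(points: List[Keypoint]) -> bytes:
    """Pack each key point as two little-endian unsigned 16-bit integers."""
    payload = bytearray()
    for x, y in points:
        payload += struct.pack("<HH", round(x * SCALE), round(y * SCALE))
    return bytes(payload)


def decode_keypoints(payload: bytes) -> List[Keypoint]:
    """Recover normalized key points from the packed payload."""
    return [(x / SCALE, y / SCALE) for x, y in struct.iter_unpack("<HH", payload)]


# Hypothetical key points spread along a diagonal, standing in for landmarks
# around the eyes, nose, and mouth.
keypoints = [(i / NUM_KEYPOINTS, 1 - i / NUM_KEYPOINTS) for i in range(NUM_KEYPOINTS)]

keypoint_payload = encode_keypoints(keypoints)
raw_frame_bytes = FRAME_WIDTH * FRAME_HEIGHT * CHANNELS  # uncompressed RGB frame

print(f"Key point payload per frame: {len(keypoint_payload)} bytes")
print(f"Uncompressed frame: {raw_frame_bytes} bytes")
```

Under these assumptions, each frame's key points fit in a few dozen bytes, while the reference image only has to be sent once at the start of the call. The receiver would pass the decoded key points and the stored reference image to its generator network to synthesize the frame.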

Further Examples:

The team of researchers working on this development demonstrated that on a fast internet connection both methods give similar results. It is in low-bandwidth situations that the new neural network gets to spread its wings. They released some pretty astonishing results that look almost too good to be true. The neural network produces clear, artifact-free images even in extremely low-bandwidth situations. It even works when the person in the video is wearing a mask, glasses, or headphones.

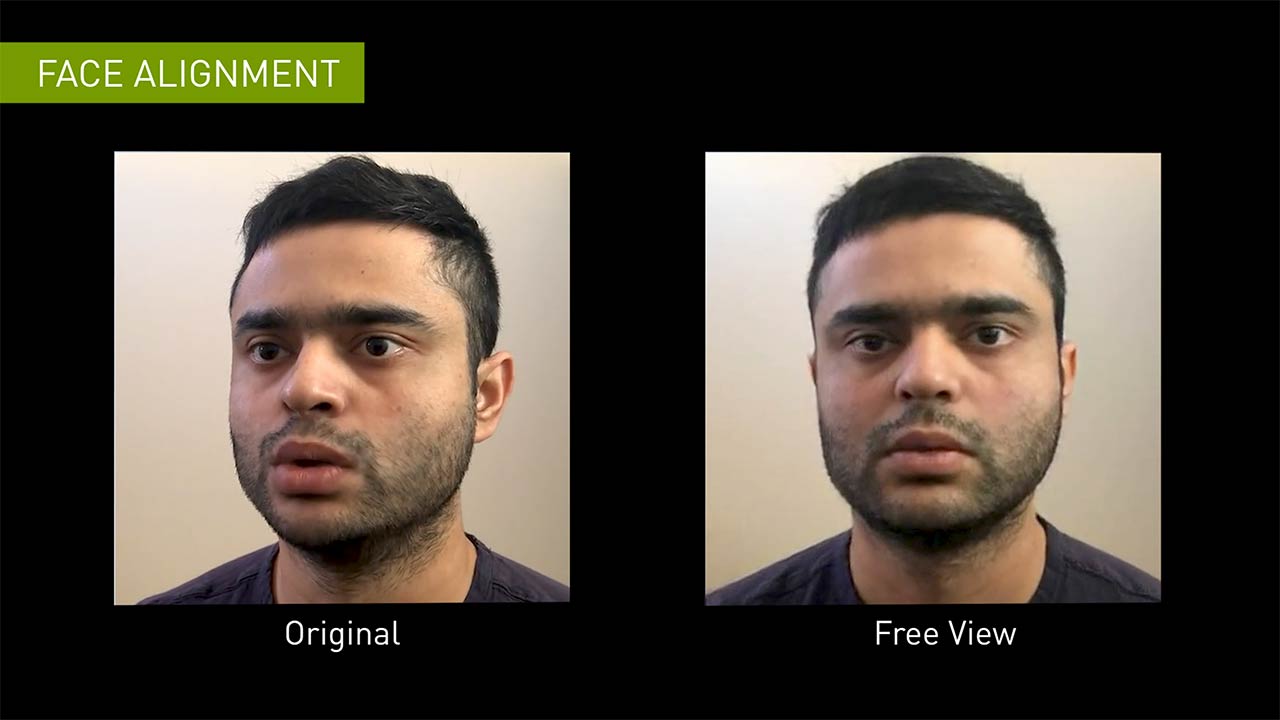

This technology will not only improve video quality, it will improve the whole video call experience. With less data used for video, the software can devote more bandwidth to audio and make it less choppy and glitchy. That is not the limit, however. Because of the flexible nature of this system, users can even change the camera angle in software. They could be looking sideways, and the software will reconstruct the image so that they appear to be looking directly at the screen.

NVIDIA calls this specific feature “Free view”. It will fix a lot of perspective issues for people whose cameras sit off to the side, and it will also make their video more immersive. In a similar application, the software can also animate an avatar. Since the algorithm works by tracking key points on the face, it can easily map your facial expressions onto your avatar.

Lastly, there is one security issue linked to this technology. We are all aware of what deepfakes are and how they work. Because this application works by synthesizing a face from a reference image and a set of key points, there are questions about how it will be deployed and whether it could lead to problems with deepfakes. Deepfakes are getting better with every development, and NVIDIA needs to find security measures to address that if it plans on using this technique. You can learn more about this new development here.